Free AI Porn Generator: Why “Free” Is the Most Dangerous Word in the Search

Why This Keyword Gets So Much Traffic

The search term “free AI porn generator” attracts attention because it combines three things that draw quick clicks: AI, adult content, and the promise of getting something for nothing. But the real issue behind the keyword is not convenience. It is risk. Regulators and major platforms increasingly treat synthetic sexual content as part of a bigger problem involving deception, impersonation, and non-consensual abuse. The European Commission’s guidance on Article 50 of the AI Act says transparency obligations apply to certain generative, interactive, and deepfake systems to reduce risks such as deception and impersonation.

What People Usually Mean by a “Free AI Porn Generator”

In plain language, people searching for this term are usually looking for a tool that can generate explicit synthetic images or sexualized content from prompts, uploaded photos, or character settings. That is exactly why the category is controversial. Once a service can create sexual imagery from text or photos, it can also be misused to fabricate fake nudes, sexualize real people without permission, or produce exploitative material that spreads quickly across search, social, and messaging platforms. Meta said in June 2025 that it sued the company behind CrushAI apps because those apps allowed users to create AI-generated nude or sexually explicit images of individuals without their consent.

Why “Free” Should Make Users More Careful, Not Less

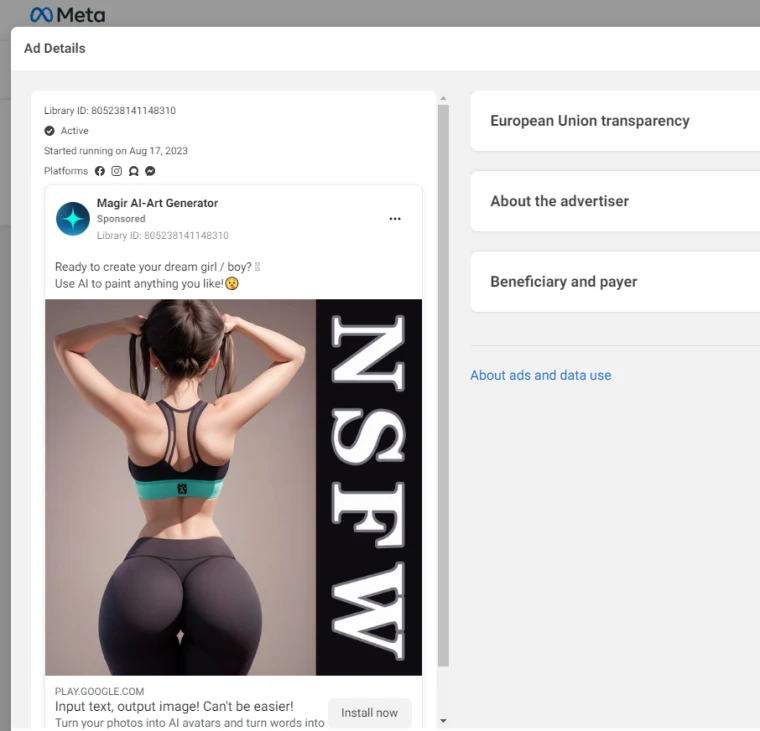

The word “free” sounds attractive, but in this category it should make users more cautious. A service offering synthetic sexual generation at no upfront cost still has to make money somehow, and that raises questions about ads, upsells, data handling, and weak moderation. More importantly, many of the most aggressive products in this space operate outside mainstream app-store ecosystems. Google Play’s current policies say apps cannot contain or promote pornography, sexually gratifying content or services, sexually predatory behavior, or non-consensual sexual content. That means the tools pushing furthest into sexualized AI are more likely to appear in less transparent channels, where user protection may be weaker.

The Biggest Problem Is Consent

The central issue is not whether AI can generate explicit material. It is whether the people depicted, imitated, or targeted ever agreed to it. A so-called free AI porn generator can be used to create humiliating fake sexual imagery of classmates, coworkers, former partners, influencers, or strangers using nothing more than ordinary photos. That is why governments are no longer treating the topic as a niche internet curiosity. In the United States, the TAKE IT DOWN Act became law in May 2025. Official summaries say it targets non-consensual intimate imagery, including AI-generated deepfakes, and requires covered platforms to remove such content within 48 hours after valid notice from a victim.

Why Mainstream Platforms Are Tightening Their Response

Big platforms are under pressure because discovery is what turns harmful content into a viral problem. Google now provides removal pathways for personal sexual content and artificial imagery in Search, and in February 2026 it announced a simpler system for reporting non-consensual explicit images, including the ability to report multiple images at once and filter similar results. That matters because a victim’s first concern is usually not the technical debate around AI. It is getting the content taken down, delisted, or made harder to find before it spreads further.

The Child-Safety Dimension Makes This Much More Serious

There is also a child-protection issue that makes this topic impossible to treat as ordinary adult entertainment. The National Center for Missing & Exploited Children says generative AI is being used to create child sexual abuse material and fake nude imagery of children, including content linked to nudify-style apps. NCMEC warns that this synthetic abuse can cause severe emotional and psychological harm and highlights the broader risks of generative AI in sexual exploitation cases. Once that reality is part of the picture, the debate shifts from edgy technology to urgent online safety.

What Users and Businesses Should Actually Look For

From a practical point of view, the question should not be “Where can I find a free AI porn generator?” The smarter question is “What safeguards does any AI image service have?” Responsible systems should clearly disclose that users are interacting with AI, explain how uploads are handled, restrict impersonation, respond quickly to abuse reports, and make it easy to remove harmful material. Those expectations line up with the broader regulatory direction in Europe, where transparency for generative and deepfake systems is being treated as part of digital trust and user protection rather than a minor compliance detail.

Final Thoughts

From an SEO standpoint, “free AI porn generator” is a high-interest keyword. But the deeper story is not about finding the best free tool. It is about how synthetic sexual content collides with privacy, consent, abuse prevention, child safety, and platform accountability. The internet is moving toward tighter rules, faster takedowns, and greater scrutiny of tools that can generate intimate content without meaningful safeguards. In that environment, “free” is not the most important word in the search. “Safe” is.